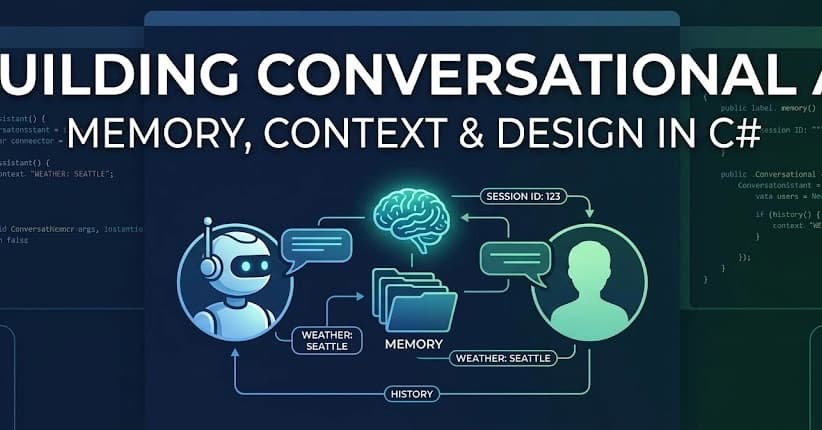

Building Conversational AI: Memory Patterns, Context Management, and Conversation Design

Chatbots are easy to build. Conversational AI that actually works is hard.

The difference? State management. A real conversation requires remembering what was said, managing context limits, and maintaining coherence across multiple exchanges.

In this article, we'll explore the patterns that make multi-turn conversations work in production C# applications.

User: What's the weather in Seattle?

A: It's 52°F and cloudy in Seattle.

User: What about tomorrow?

Without conversation history, the model has no idea "tomorrow" refers to Seattle weather. Each API call is stateless—you must send the entire relevant conversation every time.

Let's start with a foundational conversation service:
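A minimal sketch of such a service, under stated assumptions: `ChatMessage`, `ConversationService`, and the `complete` delegate are hypothetical names chosen for illustration, and the delegate stands in for whatever chat-completion client you actually use. The point it shows is that the full history is sent on every call.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// One turn in the conversation. Role is "system", "user", or "assistant".
public sealed record ChatMessage(string Role, string Content);

public sealed class ConversationService
{
    private readonly List<ChatMessage> _history = new();

    // Hypothetical stand-in for a real chat-completion client call.
    private readonly Func<IReadOnlyList<ChatMessage>, Task<string>> _complete;

    public ConversationService(
        string systemPrompt,
        Func<IReadOnlyList<ChatMessage>, Task<string>> complete)
    {
        _complete = complete;
        _history.Add(new ChatMessage("system", systemPrompt));
    }

    public IReadOnlyList<ChatMessage> History => _history;

    public async Task<string> SendAsync(string userMessage)
    {
        _history.Add(new ChatMessage("user", userMessage));

        // The API keeps no state, so the whole history goes out each time.
        // A snapshot is passed so the callee never sees later mutations.
        string reply = await _complete(_history.ToArray());

        _history.Add(new ChatMessage("assistant", reply));
        return reply;
    }
}
```

With this in place, the follow-up "What about tomorrow?" reaches the model together with the earlier Seattle exchange, which is what lets it resolve the reference.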

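Models have token limits. GPT-4o has a 128K-token context window, but that doesn't mean you should fill it. Every token you send adds cost and latency, and you need to leave room for the model's response.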
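One way to make that concrete is an explicit token budget. The sketch below is illustrative only: `TokenBudget` and `TokenEstimator` are hypothetical names, the reserve sizes are arbitrary, and the four-characters-per-token rule is a rough heuristic for English text, not a substitute for a real tokenizer.

```csharp
using System;

// Splits the context window into input space, response space, and a margin.
public sealed record TokenBudget(int ContextWindow, int ReservedForResponse, int SafetyMargin)
{
    // Tokens available for the system prompt plus conversation history.
    public int MaxInputTokens => ContextWindow - ReservedForResponse - SafetyMargin;
}

public static class TokenEstimator
{
    // Rough heuristic: about four characters per token, rounded up.
    // Assumption for illustration; use a real tokenizer for accurate counts.
    public static int Estimate(string text) => (text.Length + 3) / 4;
}
```

For example, `new TokenBudget(128_000, 4_000, 1_000).MaxInputTokens` leaves 123,000 tokens for input. In practice you would likely choose a far smaller working budget than the window allows.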

The simplest approach is a sliding window: drop the oldest messages until the conversation fits the token budget.
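A sketch of that trimming step, assuming a hypothetical `SlidingWindow.Trim` helper and the same rough character-based token estimate (an assumption, not a tokenizer). System messages are always kept, and the newest turns are kept for as long as they fit.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public sealed record ChatMessage(string Role, string Content);

public static class SlidingWindow
{
    // Rough estimate (about four characters per token); illustrative only.
    private static int Estimate(ChatMessage m) => (m.Content.Length + 3) / 4;

    public static List<ChatMessage> Trim(IReadOnlyList<ChatMessage> messages, int maxTokens)
    {
        // System messages carry the instructions, so they are never dropped.
        var result = messages.Where(m => m.Role == "system").ToList();
        int used = result.Sum(m => Estimate(m));

        // Walk backwards from the newest turn, keeping turns while they fit.
        var kept = new List<ChatMessage>();
        foreach (var m in messages.Where(m => m.Role != "system").Reverse())
        {
            int cost = Estimate(m);
            if (used + cost > maxTokens) break;
            kept.Add(m);
            used += cost;
        }

        kept.Reverse(); // restore chronological order
        result.AddRange(kept);
        return result;
    }
}
```

Note that the trimmed window can begin with an assistant message. If your model or prompt design expects turns to start with the user, drop messages in user/assistant pairs instead.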

For longer conversations, summarize old messages instead of dropping them:
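A sketch of summarization-based compaction, under assumptions: `SummarizingCompactor` is a hypothetical helper, and the `summarize` delegate stands in for a model call that condenses a transcript. The most recent turns stay verbatim, and everything older is replaced by a single summary message.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

public sealed record ChatMessage(string Role, string Content);

public static class SummarizingCompactor
{
    // keepRecent: number of most recent non-system messages kept verbatim.
    // summarize: hypothetical delegate that asks a model to condense text.
    public static async Task<List<ChatMessage>> CompactAsync(
        IReadOnlyList<ChatMessage> messages,
        int keepRecent,
        Func<string, Task<string>> summarize)
    {
        var system = messages.Where(m => m.Role == "system").ToList();
        var turns = messages.Where(m => m.Role != "system").ToList();

        // Nothing to compact while the conversation is still short.
        if (turns.Count <= keepRecent) return messages.ToList();

        int cut = turns.Count - keepRecent;
        var older = turns.Take(cut);
        var recent = turns.Skip(cut);

        // Flatten the older turns into a plain transcript for the summarizer.
        string transcript = string.Join("\n", older.Select(m => $"{m.Role}: {m.Content}"));
        string summary = await summarize(transcript);

        var result = new List<ChatMessage>(system)
        {
            new ChatMessage("system", $"Summary of the earlier conversation: {summary}")
        };
        result.AddRange(recent);
        return result;
    }
}
```

The trade-off is an extra model call each time you compact, and a summary can lose details. Compacting only when the history crosses a threshold keeps that cost occasional.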

The system prompt sets the foundation for every conversation. Here are proven patterns:
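As one illustration, a common way to structure a system prompt is a role statement, a short list of rules, and an explicit response format. The sketch below assumes that structure; `SystemPromptBuilder` and its parameters are hypothetical names, and the layout is only one of many reasonable choices.

```csharp
using System;
using System.Collections.Generic;
using System.Text;

public static class SystemPromptBuilder
{
    // Composes a prompt from a role, behavioral rules, and a response format.
    public static string Build(string role, IEnumerable<string> rules, string responseFormat)
    {
        var sb = new StringBuilder();
        sb.Append("You are ").Append(role).Append(".\n\n");

        sb.Append("Rules:\n");
        foreach (var rule in rules)
        {
            sb.Append("- ").Append(rule).Append('\n');
        }

        sb.Append("\nResponse format: ").Append(responseFormat);
        return sb.ToString();
    }
}
```

Building the prompt from parts keeps the rules reviewable and testable, and lets different conversation types share a role while varying the constraints.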

Here's a complete, production-ready conversation service:
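A compact sketch of how these pieces might fit together, under stated assumptions: `ManagedConversationService` is a hypothetical name, the `complete` delegate stands in for a real chat client, sessions live in memory only, and token counts use the rough four-characters-per-token heuristic. Treat it as a starting point, not a finished implementation.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

public sealed record ChatMessage(string Role, string Content);

public sealed class ManagedConversationService
{
    private sealed class Session
    {
        public List<ChatMessage> Turns { get; } = new();
        public SemaphoreSlim Gate { get; } = new(1, 1); // one request per session at a time
    }

    private readonly ConcurrentDictionary<string, Session> _sessions = new();
    private readonly string _systemPrompt;
    private readonly int _maxInputTokens;
    // Hypothetical stand-in for a real chat-completion client call.
    private readonly Func<IReadOnlyList<ChatMessage>, CancellationToken, Task<string>> _complete;

    public ManagedConversationService(string systemPrompt, int maxInputTokens,
        Func<IReadOnlyList<ChatMessage>, CancellationToken, Task<string>> complete)
    {
        _systemPrompt = systemPrompt;
        _maxInputTokens = maxInputTokens;
        _complete = complete;
    }

    // Rough estimate; an assumption for illustration, not a tokenizer.
    private static int Estimate(string text) => (text.Length + 3) / 4;

    public async Task<string> SendAsync(string conversationId, string userMessage,
        CancellationToken ct = default)
    {
        var session = _sessions.GetOrAdd(conversationId, _ => new Session());
        await session.Gate.WaitAsync(ct);
        try
        {
            var pending = new ChatMessage("user", userMessage);
            string reply = await _complete(BuildRequest(session.Turns, pending), ct);

            // Commit both turns only after success, so a failed call
            // leaves the stored history unchanged.
            session.Turns.Add(pending);
            session.Turns.Add(new ChatMessage("assistant", reply));
            return reply;
        }
        finally
        {
            session.Gate.Release();
        }
    }

    // System prompt + newest turns that fit the budget + the pending message.
    private List<ChatMessage> BuildRequest(List<ChatMessage> turns, ChatMessage pending)
    {
        int used = Estimate(_systemPrompt) + Estimate(pending.Content);
        var kept = new List<ChatMessage>();
        for (int i = turns.Count - 1; i >= 0; i--)
        {
            int cost = Estimate(turns[i].Content);
            if (used + cost > _maxInputTokens) break;
            kept.Add(turns[i]);
            used += cost;
        }
        kept.Reverse(); // restore chronological order

        var request = new List<ChatMessage> { new ChatMessage("system", _systemPrompt) };
        request.AddRange(kept);
        request.Add(pending);
        return request;
    }
}
```

Only the outgoing request is trimmed here; the stored history still grows without bound. A real deployment would also need persistence, session expiry, retries, and accurate token counting.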

In Part 4, we'll tackle production patterns: observability, testing, cost management, and everything else you need to run LLM applications in the real world.

This is Part 3 of the "Generative AI Patterns in C#" series. Conversations are where AI meets real users—get the patterns right, and your application becomes genuinely useful.

https://dev.to/bspann/building-conversational-ai-memory-patterns-context-management-and-conversation-design-2i58